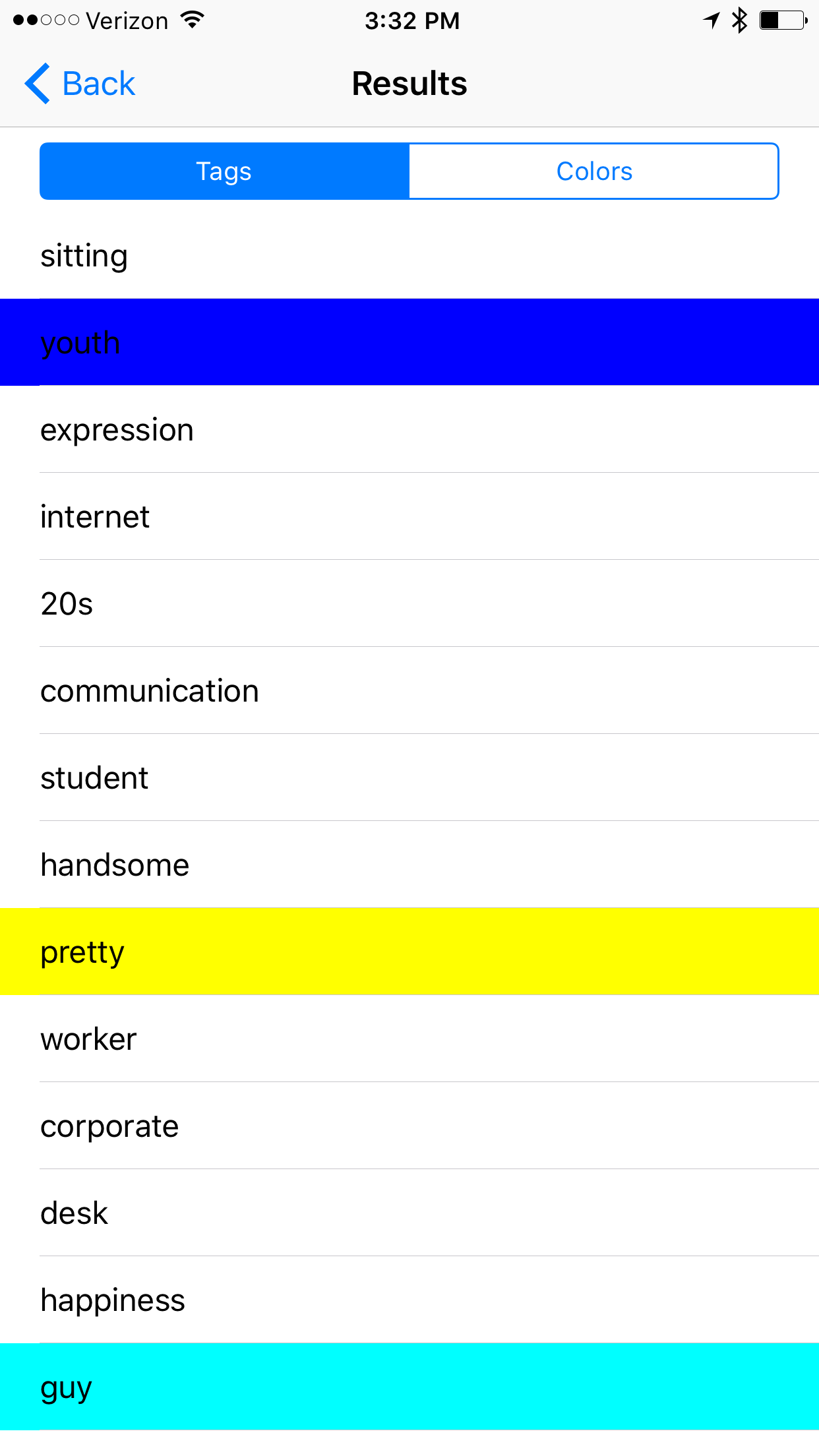

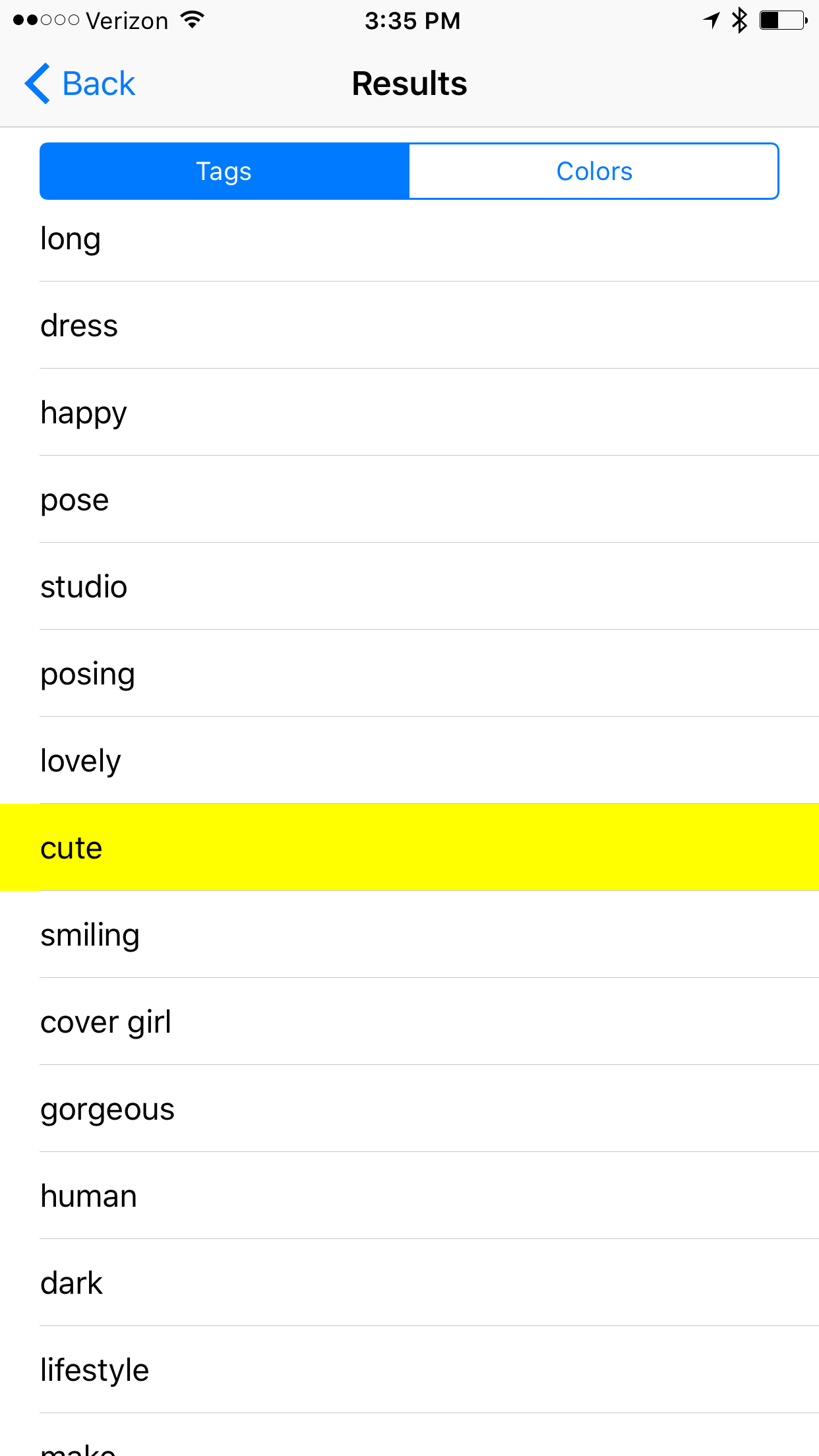

Given how successfully these crowd-sourced data sets have been used, what else could other viral video data sets offer?

The ALS Ice Bucket Challenge and the Onset of Hypothermia

The first thing that came to mind was the ALS Ice Bucket Challenge, in which participants are doused with ice water while their reactions are filmed. This collection of videos shares some of the valuable features of the Mannequin Challenge, but it opens a different avenue of investigation: could we use these videos to detect the symptoms of hypothermia or other temperature-induced maladies? A search for "Ice Bucket Challenge" returns almost 2 million results, which gives us a remarkable opportunity to use these memes to generate valuable insights into human reactions to stimuli.

Cinnamon Challenge and Respiratory Inflammation

I don't advocate that anyone try this one, but the Cinnamon Challenge had participants attempt to swallow a spoonful of cinnamon, which causes most people to cough violently and inevitably inhale fine cinnamon particles. Participants experience a high degree of respiratory distress, and once again their reactions are captured on camera for us to analyze.

A quick look through the list of viral challenges turns up a few more that could provide valuable medical insights and may be worth investigating:

Ghost Pepper Challenge - Irritation/Nausea/Vomiting/Analgesic Reactions

Rotating Corn Challenge - Loose Teeth/Tooth Decay/Gum Disease

Tide Pod Challenge - Poisoning

Kylie Jenner Lip Challenge - Inflammation/Allergic Reactions

Car Surfing Challenge - Scrapes/Lacerations/Bruising/Broken Bones/Overall Life Expectancy

What other challenges could provide insight for us?

References:

Learning the Depths of Moving People by Watching Frozen People

https://www.youtube.com/watch?v=fj_fK74y5_0

Moving Camera, Moving People: A Deep Learning Approach to Depth Prediction

https://ai.googleblog.com/2019/05/moving-camera-moving-people-deep.html

Learning the Depths of Moving People by Watching Frozen People (paper)

https://arxiv.org/pdf/1904.11111.pdf

Acknowledgements

The research described in this post was done by Zhengqi Li, Tali Dekel, Forrester Cole, Richard Tucker, Noah Snavely, Ce Liu and Bill Freeman. We would like to thank Miki Rubinstein for his valuable feedback.