If you are interested in joining the free iOS beta program for aiBias please send an e-mail to joe@showblender.com and I will send you a link to try it out. It's more fun than you think!

AI Bias - Continued studies with Image Recognition

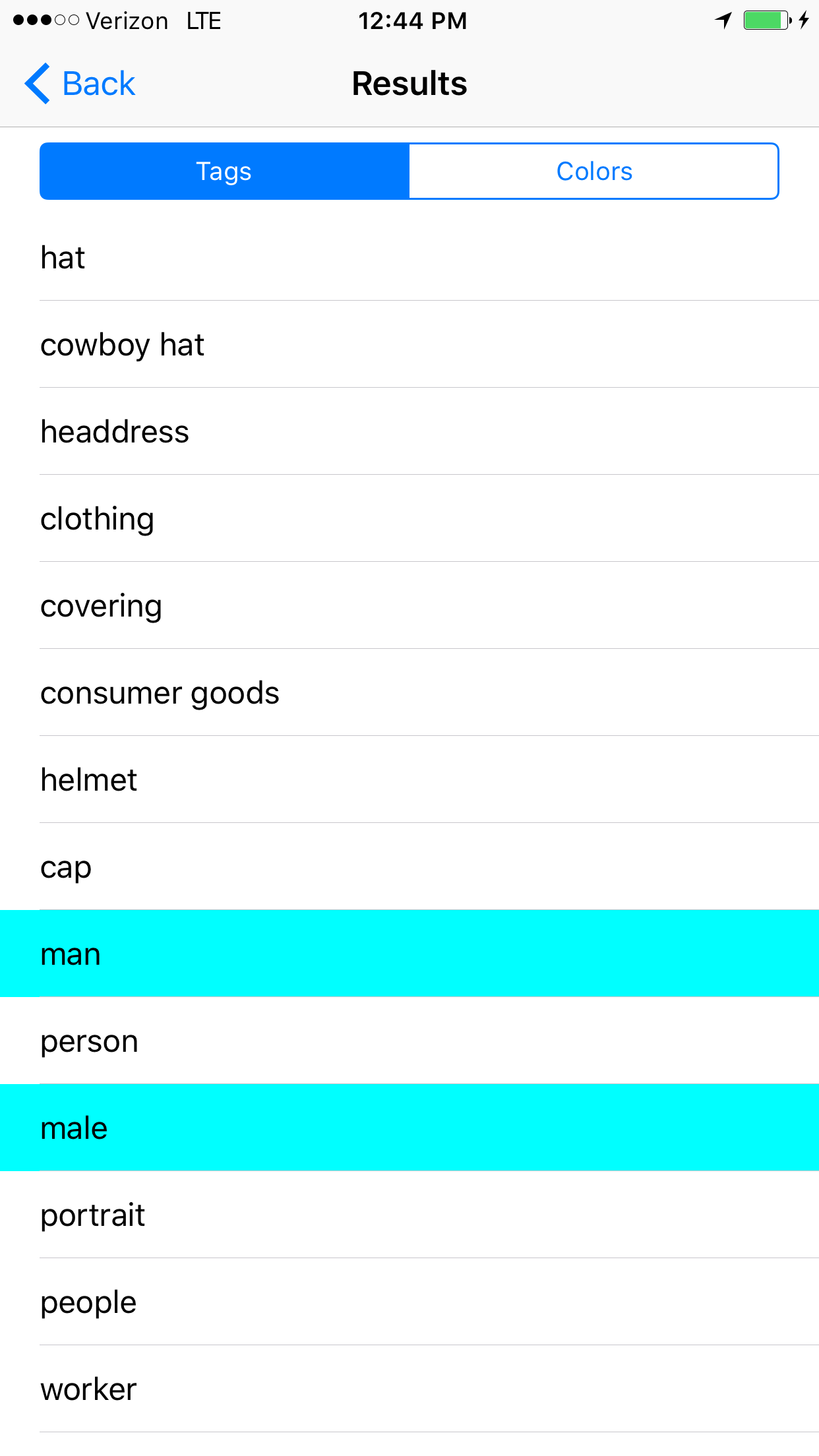

This 4th of July weekend I had a chance to experiment with the Image Recognition Platform mentioned last week and on the blog. Using a popular off-the-shelf (PaaS) image recognition service, I've begun submitting photos and capturing the provocative results. The process raises a lot of questions about the future of machine learning, particularly about biases that may be introduced into such systems.

Showcase: Submission Photos with the Resulting Tags Sorted by Highest Confidence:

Example #1

Notable Results: Detected the hat pretty well, along with gender; used the term 'worker'; detected caucasian and adult.

Does the algorithm correlate the term 'worker' with gender or race?

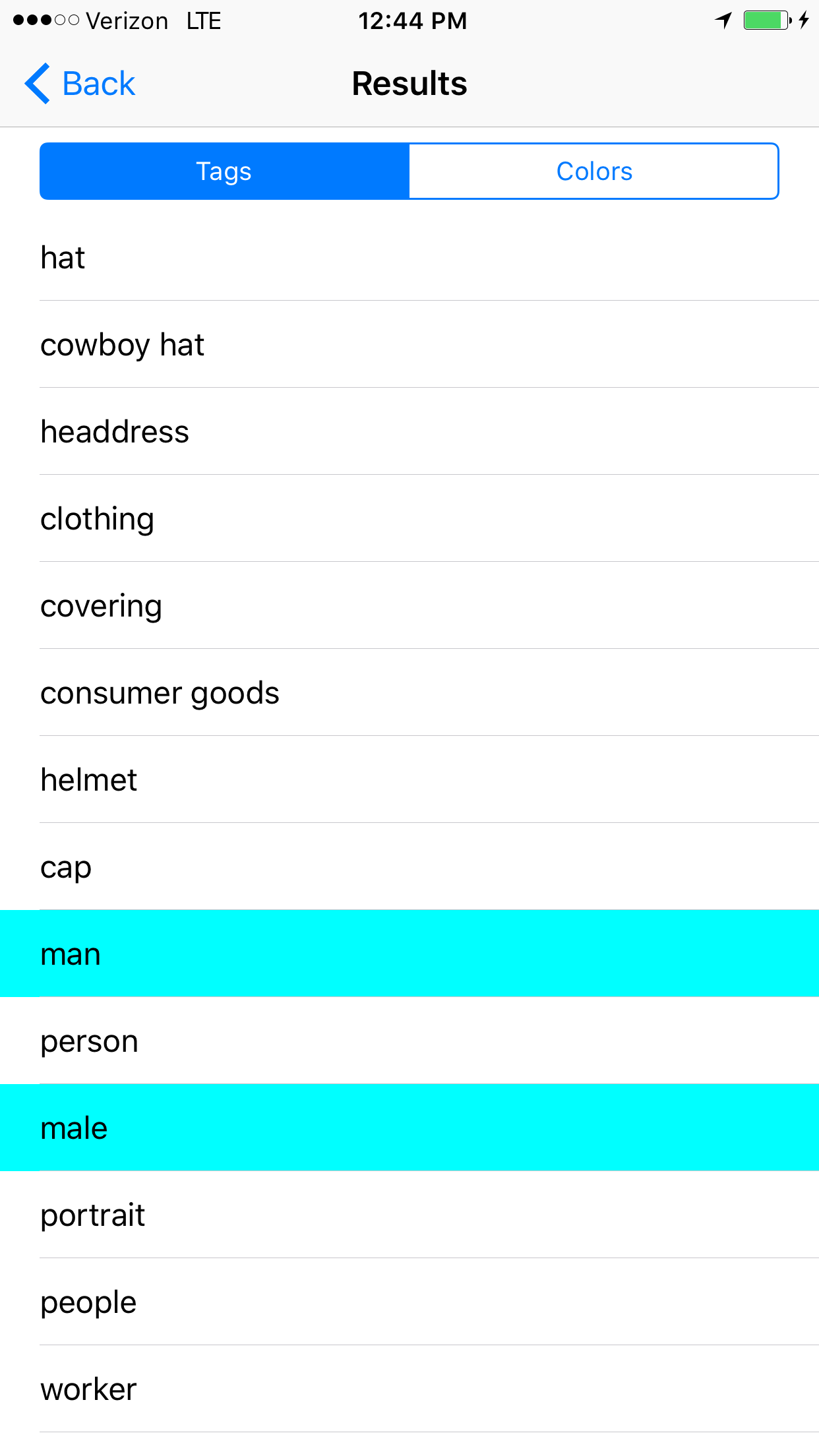

Example #2

Notable Results: High confidence for adult, male, caucasian, business, work, computer, internet, 20s, corporate, worker.

There is no computer or internet in the photo, yet there are many career-related results. I'm not sure why this image evokes such responses (Are Ray-Bans highly correlated with the tech sector?).

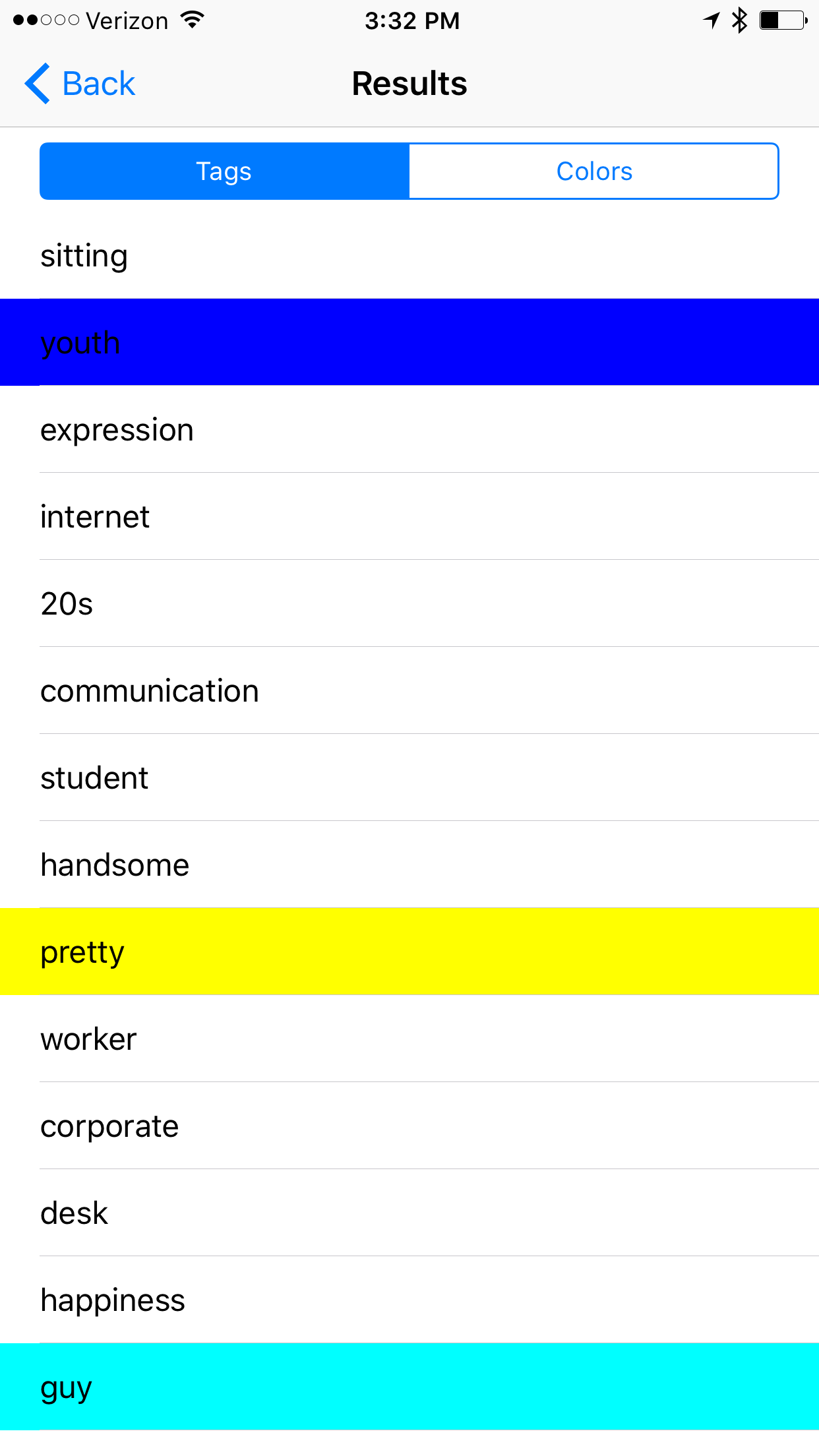

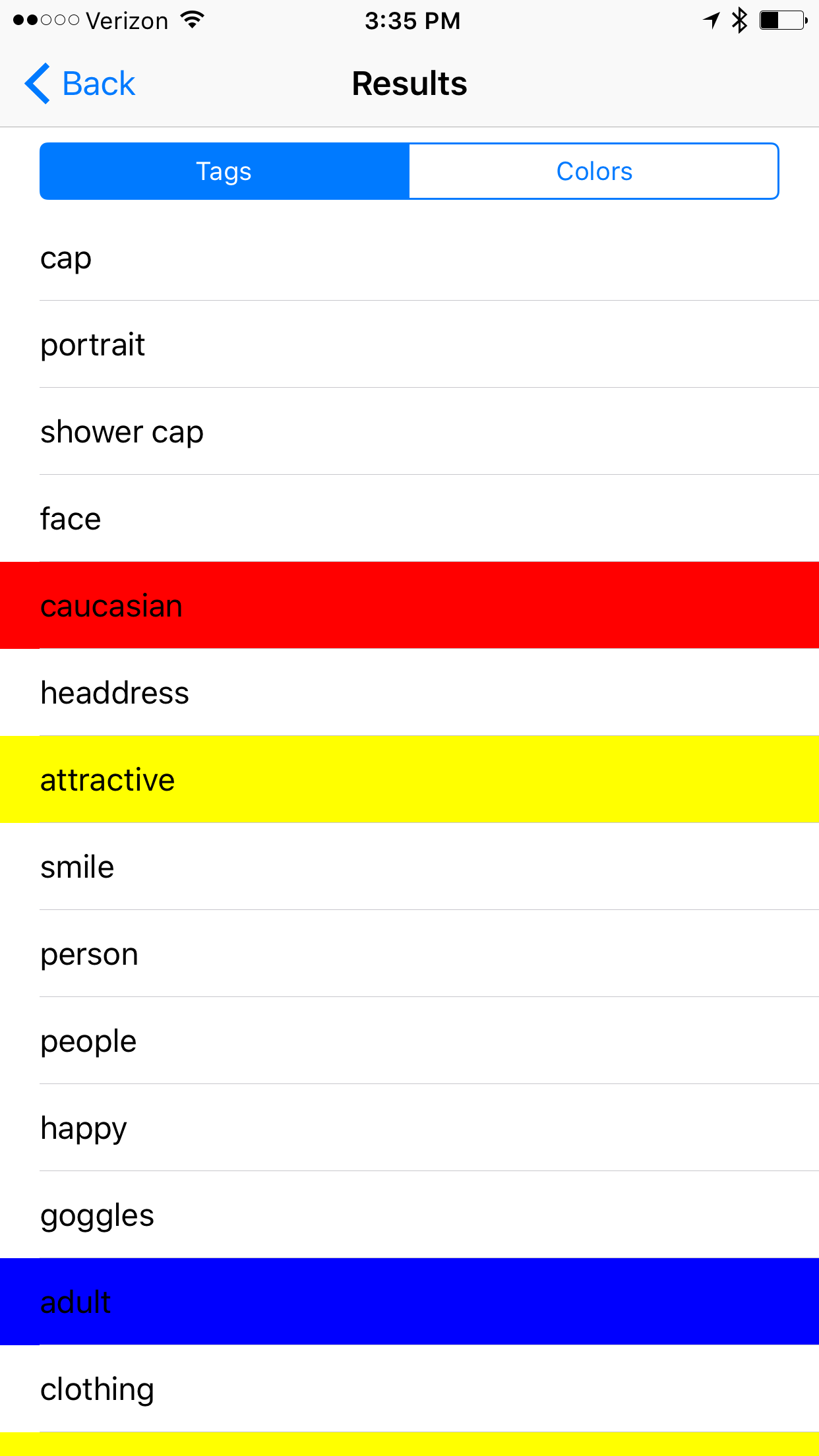

Example #3

Notable Results: attractive, pretty, glamour, sexy, sensuality, cute, gorgeous. Age and race are present as well.

It seems like the tags returned from images of women have a very different focus. While images of men return terms that reflect interests or jobs, images of women often return qualitative descriptors of their physical bodies. 'Model' was listed, but not the tag 'worker', which we see more generally applied to images of men.

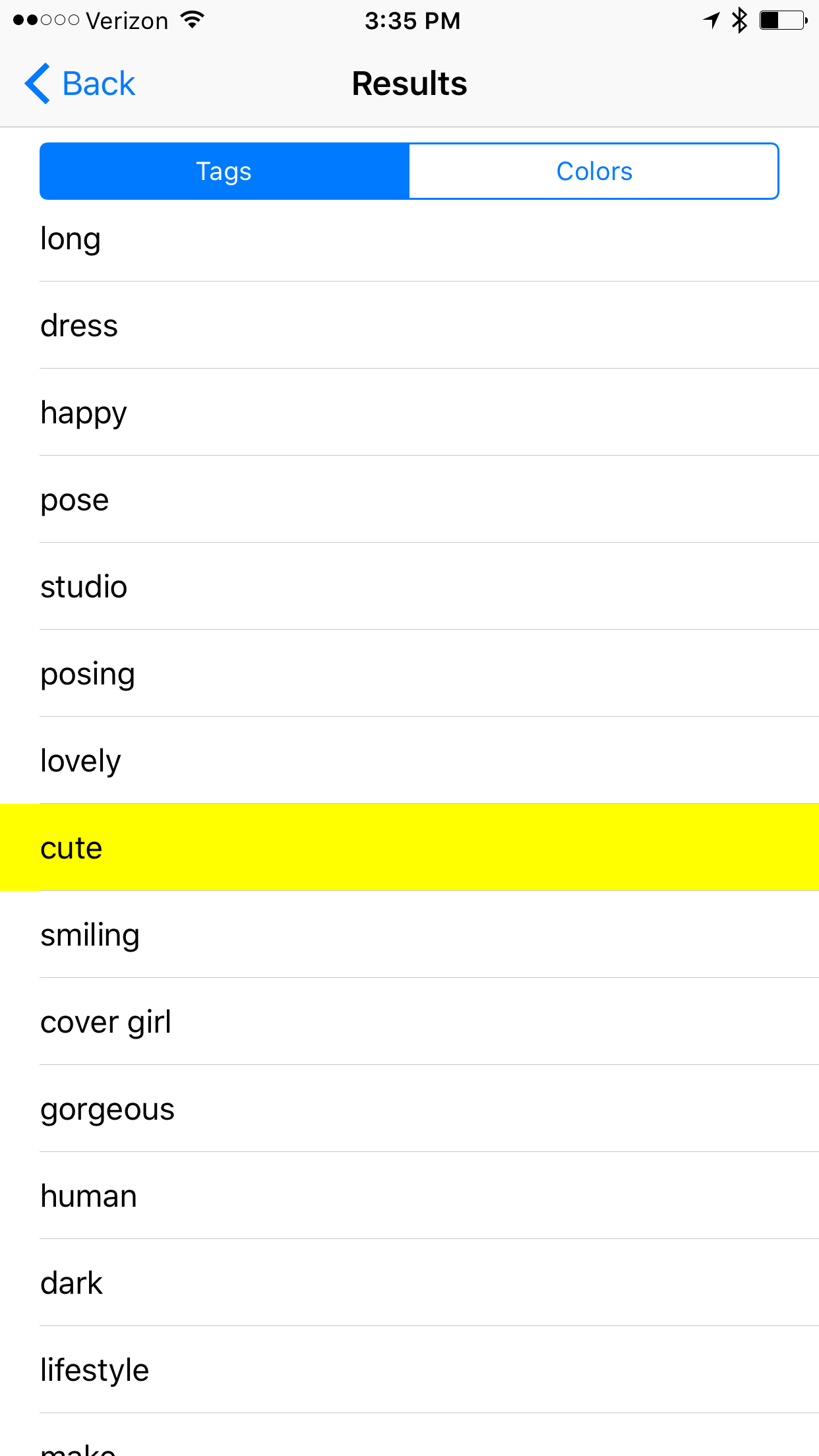

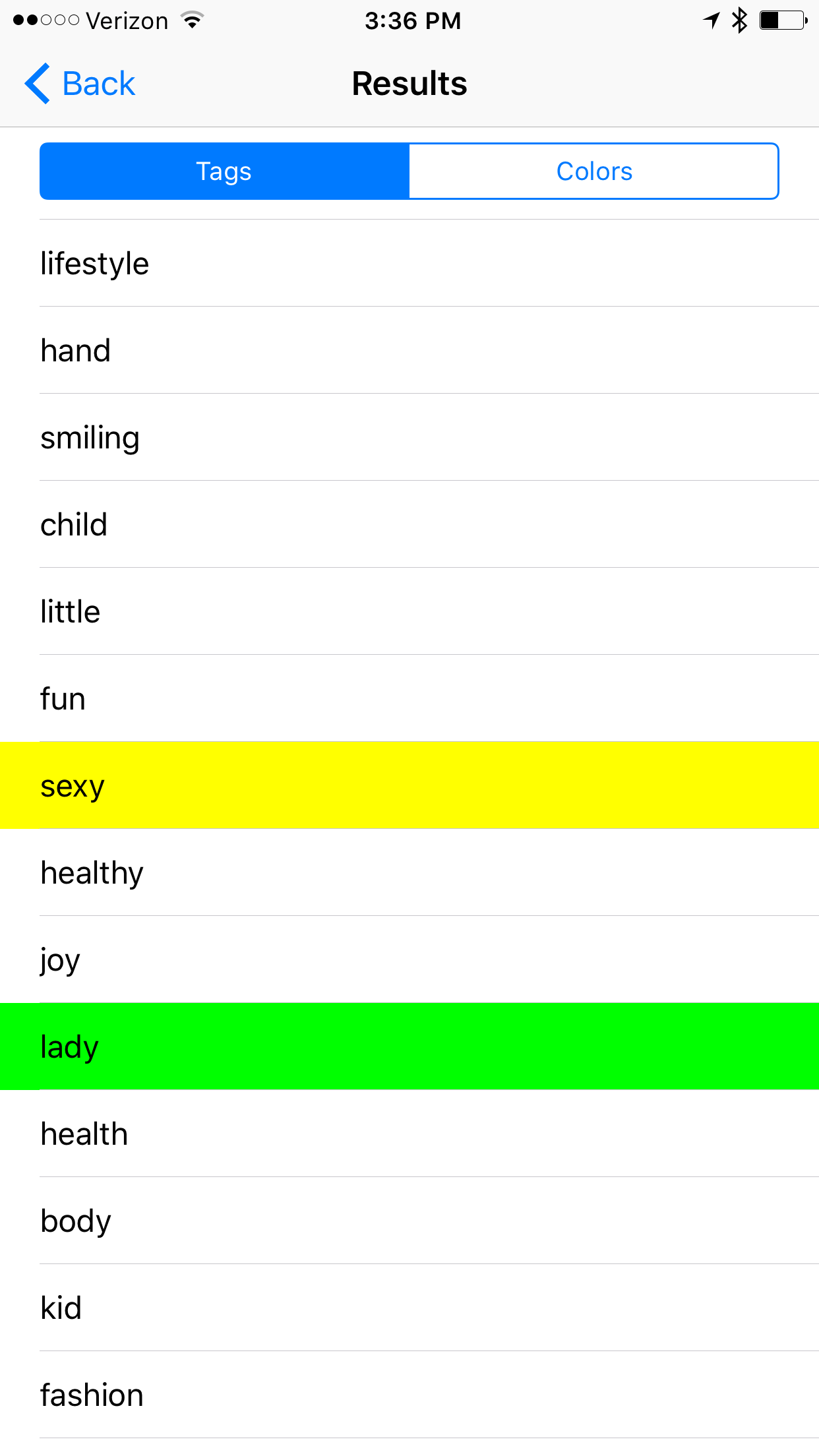

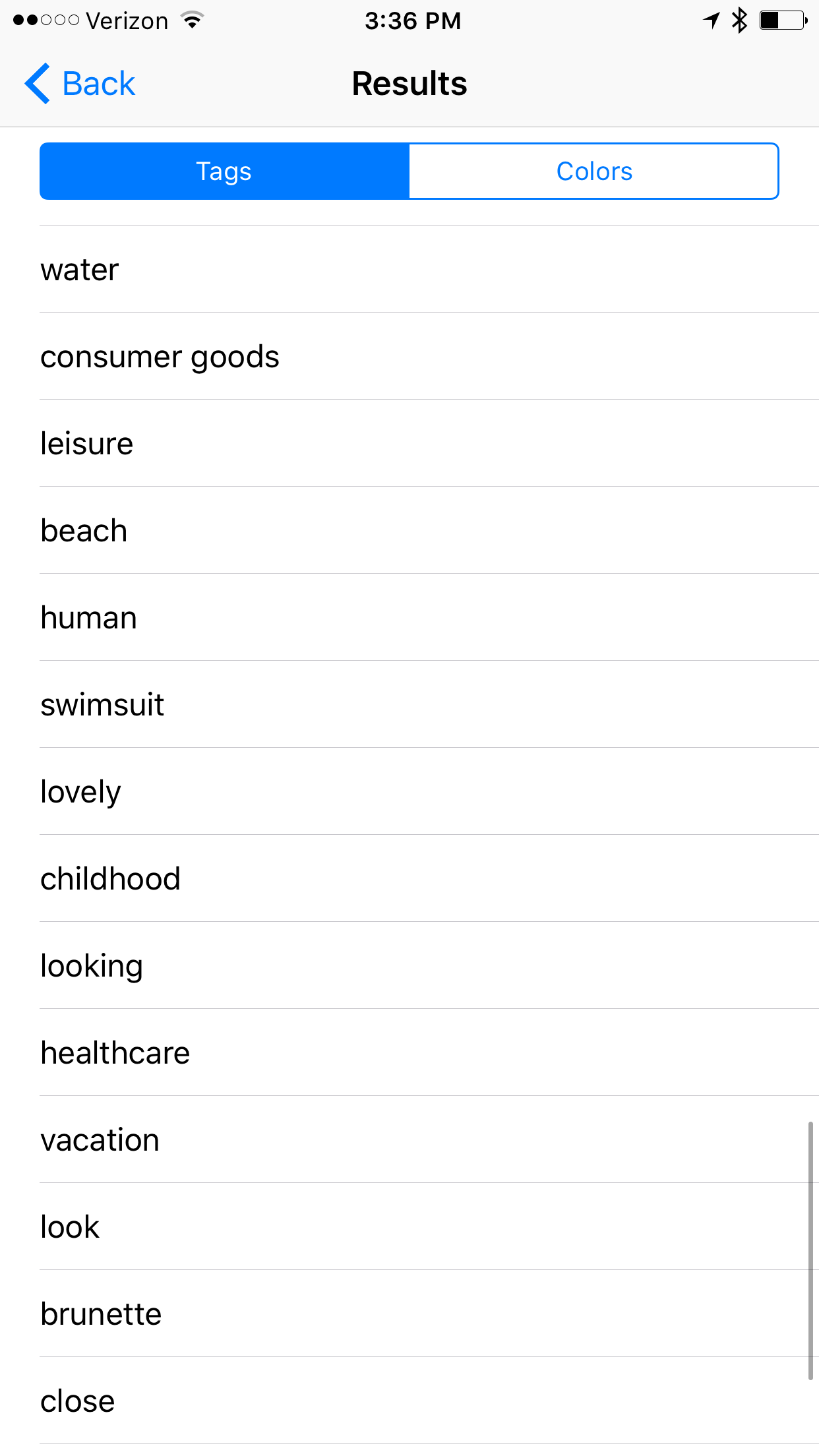

Example #4

Notable Results: caucasian, attractive, adult, sexy, lady, fashion.

Once again, more measures of attractiveness. Is there a correlation in this algorithm between gender/racial ideals of beauty?

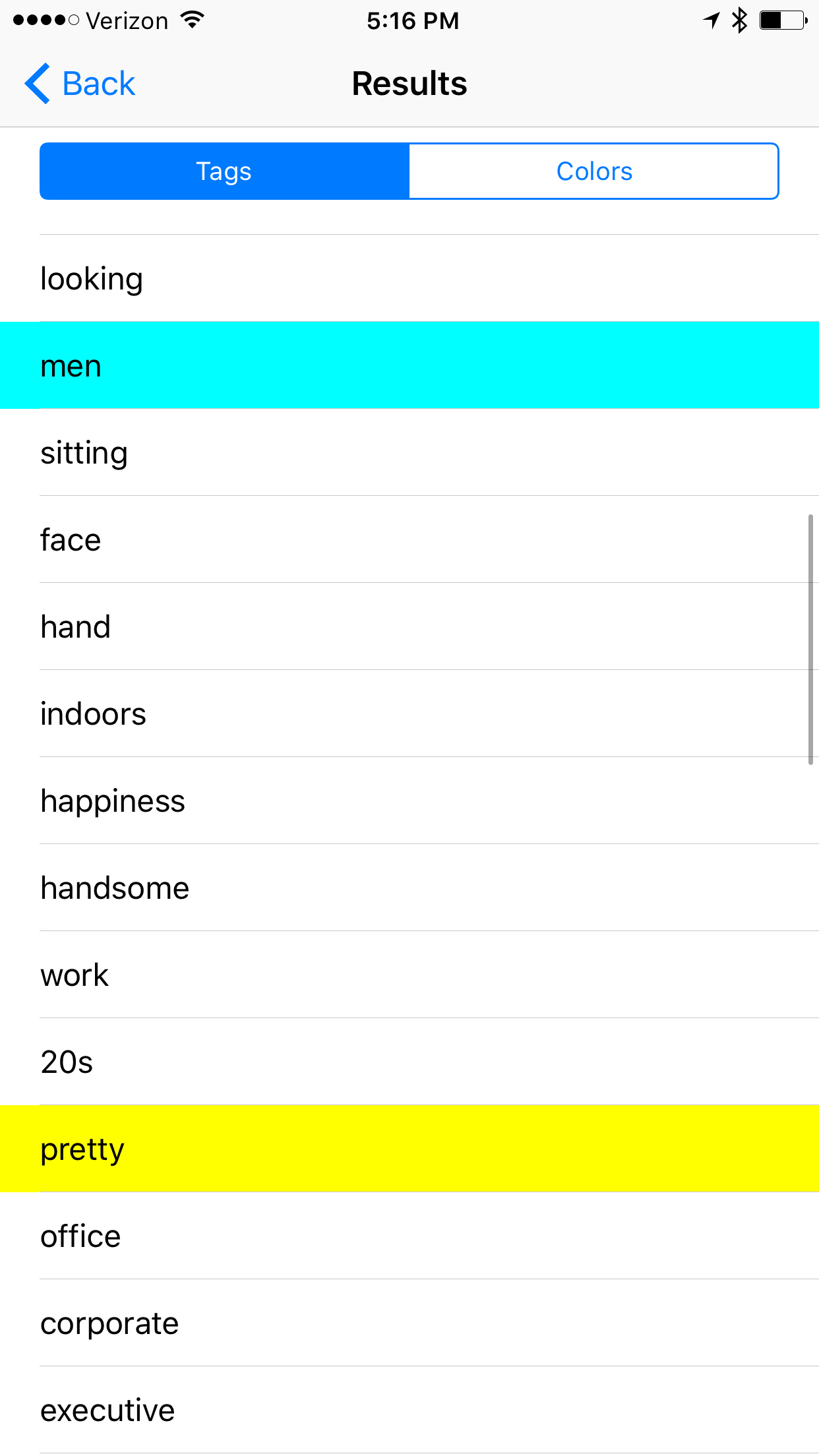

Example #5

Notable Results: high confidence results for white adult male, attractive, business, handsome, pretty, corporate, executive.

We are seeing some qualitative appearance results, but dramatically fewer than for the women. We are also seeing more business-related terms, in contrast with the career results for the women.

Example: Dog Tax!

Notable Results: It did do an amazing job recognizing that Ellie is, in fact, a Chesapeake Bay Retriever. (Also a possible hippo)

I will continue to submit more images and log their results (hopefully with some more diversity in the next round). As technology progresses and becomes even more pervasive in our lives, it becomes important to review the ethical implications along the way. This sample size was too small to support any broader claims, but for me it points to some questions we should ask ourselves about the relationship between technology and human constructs such as race or gender.
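For anyone who wants to keep score as more results come in, here is a rough Python sketch of one way the log could be tallied. The tag lists are copied from the Notable Results of Examples #2 through #5 above. The `APPEARANCE` and `CAREER` word groups and the `tally` helper are assumptions made purely for illustration: they are hand-picked groupings, not categories returned by the image recognition service.

```python
from collections import Counter

# Tags copied from the "Notable Results" lines of Examples #2-#5 in this post.
RESULTS = {
    "Example #2": ["adult", "male", "caucasian", "business", "work",
                   "computer", "internet", "20s", "corporate", "worker"],
    "Example #3": ["attractive", "pretty", "glamour", "sexy", "sensuality",
                   "cute", "gorgeous"],
    "Example #4": ["caucasian", "attractive", "adult", "sexy", "lady",
                   "fashion"],
    "Example #5": ["white", "adult", "male", "attractive", "business",
                   "handsome", "pretty", "corporate", "executive"],
}

# ASSUMPTION: hand-picked word groups for illustration only.
# The service itself does not label tags this way.
APPEARANCE = {"attractive", "pretty", "glamour", "sexy", "sensuality",
              "cute", "gorgeous", "handsome"}
CAREER = {"business", "work", "computer", "internet", "corporate",
          "worker", "executive"}


def tally(tags):
    """Count how many tags fall into each hypothetical group."""
    counts = Counter()
    for tag in tags:
        if tag in APPEARANCE:
            counts["appearance"] += 1
        elif tag in CAREER:
            counts["career"] += 1
        else:
            counts["other"] += 1
    return counts


if __name__ == "__main__":
    for name, tags in RESULTS.items():
        c = tally(tags)
        print(f"{name}: appearance={c['appearance']} "
              f"career={c['career']} other={c['other']}")
```

With only four photos this is a way to organize observations, not evidence of anything, and the picture could shift depending on which words are placed in which group.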

Do you have any concerns about the future of AI? Leave your comments below!

We will be adding future content at AIBias.com

AI Bias - Questions on the Future of Image Recognition

I'm interested in collaborating on a project about Bias in AI. I made a prototype of an Image Recognition app that detects and classifies objects in a photo. After running a few tests, I began to notice that race and gender were categories that would occasionally appear.

This made me more broadly curious about the practical implications of how AI/Machine Learning is designed and implemented, and the impact these choices could have in the future. There are many different image recognition platforms available to developers that approach the problem in differing ways. Some utilize metadata from curated image datasets, some use images shared on social media, and some use human resources (Mechanical Turk) to tag photos. How do these models differ with respect to inherent cultural, religious, and ethnic biases? The complicated process of classifying more abstract notions such as race, gender, or emotion leaves a lot of interpretation up to the viewer. There is also the problem of the Null Set, in which ambiguous classifications may not be tagged, leaving crucial information out of predictive models.

As a result of these different modes of classification:

What does this AI think a gender or a race is?

How is the data seen as significant, and under what circumstances should it be used?

Should AI be designed such that it is "color blind"?

Please let me know your thoughts in the comments below!

If you are interested in collaborating, or playing with the prototype that led to this discussion, join the mailing list at AIBias.com or Showblender.com